Hofstadter, Penrose, and the "feeling of conscious awareness"

Penrose, Hofstadter, and Escher: three authors who have shaped my views on consciousness.

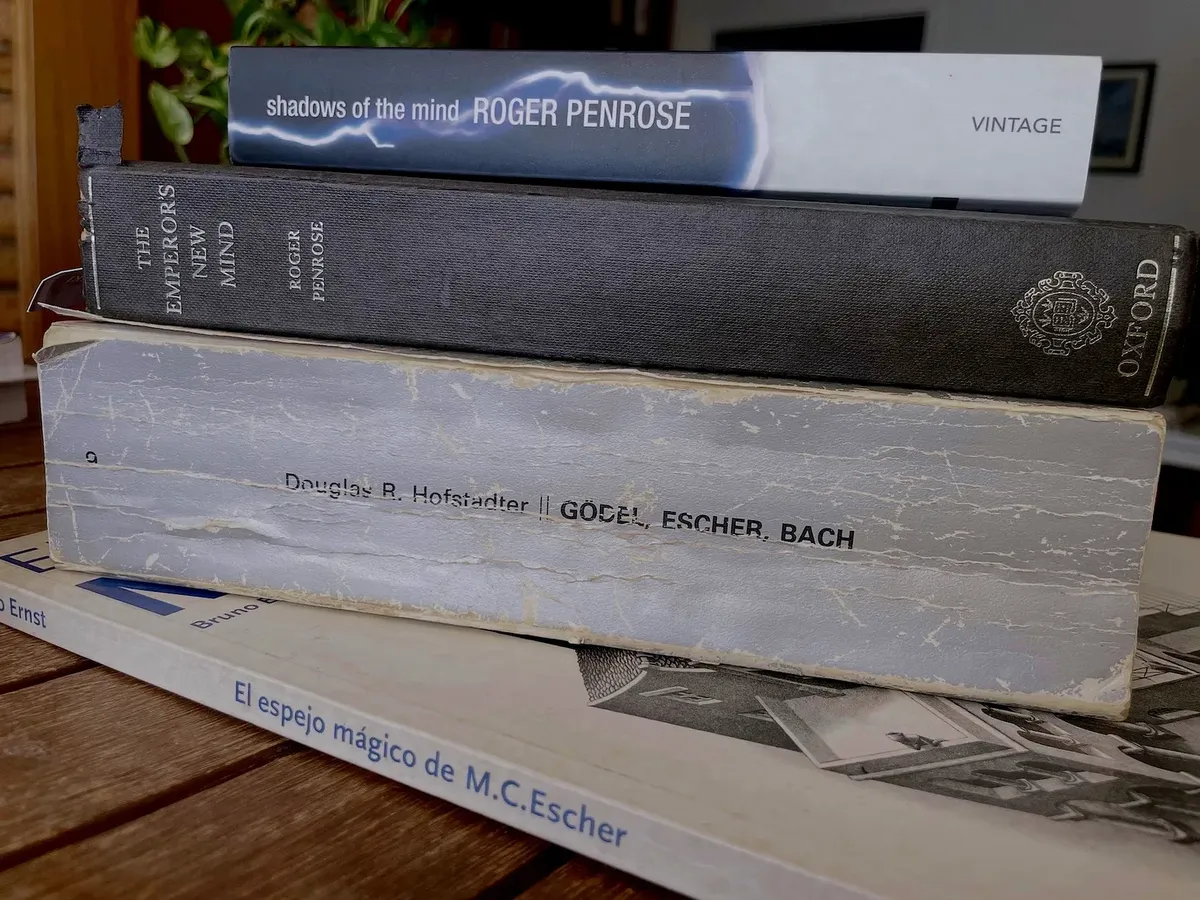

Forty years ago I read two books that left a deep mark on me: Gödel, Escher, Bach by Douglas R. Hofstadter and The Emperor’s New Mind by Roger Penrose. For decades I saw them as almost opposite visions: Hofstadter seemed to stand for the idea that AI would eventually build artificial minds thanks to its command of structure, symbols, loops, and the different levels of language. Penrose, by contrast, argued that no algorithm will ever be able to simulate what it feels like to be conscious.

Four decades later, something has happened that forces me to reread them: the rise of language models trained exclusively on text. Without cameras or sensors, these machines learn syntax and capture semantic regularities of use: they speak, summarize, program, argue. They do not solve consciousness, but they do redraw the map: they show that a large part of “linguistic intellect” can be built out of language alone.

Douglas R. Hofstadter

In 1987, in my third year of Computer Science in Alicante, I came across a huge gray book in the 80 Mundos bookstore by an author I knew from his mathematical articles in Investigación y Ciencia: Douglas R. Hofstadter. I leafed through it and was immediately astonished: by Escher’s extraordinary illustrations; by the design of an immensely complex text full of dialogues, logical deductions, long quotations, typographic games, and computer programs; and by the sheer number of fascinating topics spread across its nearly 900 pages. It was the Spanish translation of Gödel, Escher, Bach (GEB), published by Tusquets.

As I read the book, Hofstadter seemed to align himself with what was then called strong AI: the idea that we will be able to create a program that completely simulates the human mind, consciousness included. Alan Turing, in his famous article Computing Machinery and Intelligence (1950), was one of the first to defend something like that.

I tried to understand Hofstadter’s arguments, but some things did not convince me. Simulate the feeling of consciousness? The feeling of being an “I”? The feeling of seeing something red? Can a computer program generate that?

Recall that Hofstadter himself explains in the book the important idea, inherited from Turing, that running a program is nothing more than applying a set of discrete rules. There is no conceptual difference between a microprocessor executing instructions and monks copying zeros and ones onto a paper tape. I could not understand why Hofstadter, or even Turing, did not find this idea absurd. How can they believe that “consciousness” might emerge from the process of erasing and writing zeros and ones on a sheet of paper? What do they see that I do not?
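To make the “discrete rules” picture concrete, here is a minimal sketch of a Turing machine in Python. It is an illustration of my own, not taken from GEB or from Turing: the names run_turing_machine and INCREMENT_RULES, the state names, and the choice of task (adding 1 to a binary number) are all hypothetical. The rule table maps (state, symbol read) to (symbol to write, head move, next state), and each step is nothing more than a lookup followed by erasing and writing one symbol.

```python
from collections import defaultdict

# Hypothetical rule table (an illustration, not from GEB): add 1 to a binary number.
# Each entry maps (state, symbol read) -> (symbol to write, head move, next state).
# The head starts on the rightmost digit and moves left while propagating the carry.
INCREMENT_RULES: dict[tuple[str, str], tuple[str, int, str]] = {
    ("carry", "1"): ("0", -1, "carry"),  # 1 + carry = 0, keep carrying to the left
    ("carry", "0"): ("1", 0, "halt"),    # 0 + carry = 1, nothing left to do
    ("carry", "_"): ("1", 0, "halt"),    # ran off the left end: write a new leading 1
}


def run_turing_machine(rules, tape_str, head, start="carry", blank="_", max_steps=10_000):
    """Apply the rule table one discrete step at a time until the machine halts."""
    # Unvisited cells read as blank, so the tape is unbounded in both directions.
    tape = defaultdict(lambda: blank, enumerate(tape_str))
    state = start
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        write, move, state = rules[(state, tape[head])]  # pure table lookup
        tape[head] = write  # erase the old symbol and write the new one
        head += move        # shift the head left (-1), right (+1), or stay (0)
        steps += 1
    # Read back the tape from the leftmost to the rightmost non-blank cell.
    used = [i for i, symbol in tape.items() if symbol != blank]
    if not used:
        return ""
    return "".join(tape[i] for i in range(min(used), max(used) + 1))
```

Calling run_turing_machine(INCREMENT_RULES, "1011", head=3) turns 1011 into 1100. The loop never “knows” it is doing arithmetic: it only looks up a rule, rewrites one symbol, and moves. That is the sense in which a microprocessor and monks with a paper tape would be doing exactly the same thing.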

Roger Penrose

This doubt grew a couple of years later, in 1989, when the physicist Roger Penrose published his famous book The Emperor’s New Mind. I bought the English edition the following year, in 1990. I read his arguments against strong AI eagerly, tried, without success, to follow all of his explanations of quantum mechanics and cosmology, and marveled at his magnificent ink illustrations. Penrose is also an excellent draftsman and, like Hofstadter, an admirer of Escher.

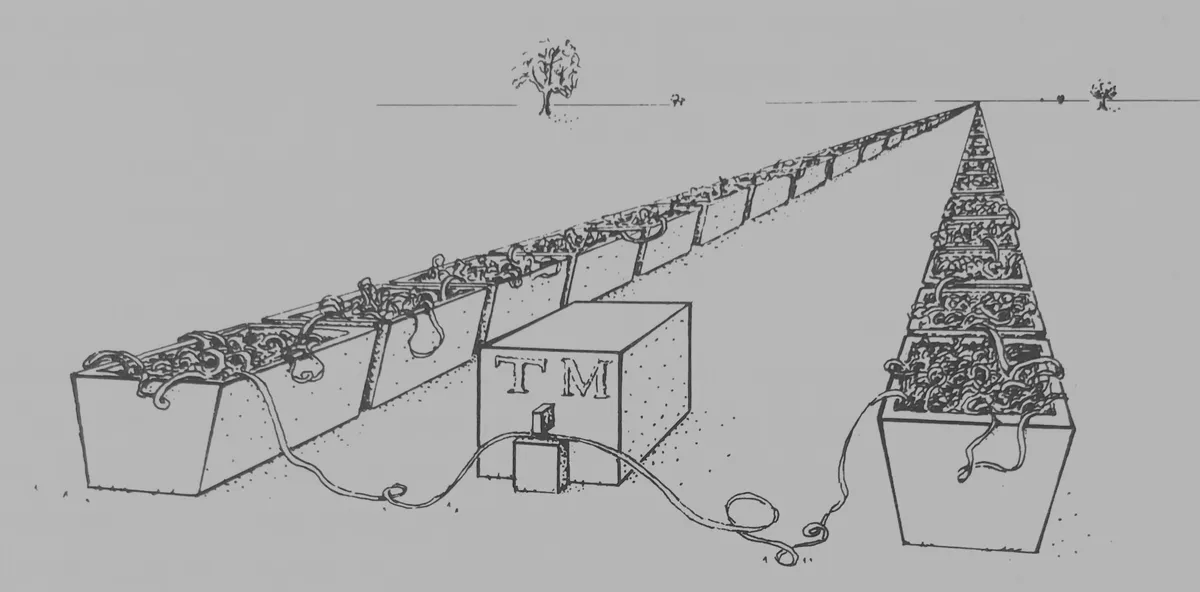

Penrose’s interpretation of a Turing machine processing an infinite tape.

Penrose’s thesis, one that convinced me and that I still hold, is that human consciousness is not algorithmic: it cannot be captured by a conventional Turing machine. Among other tools, he draws on Gödel’s incompleteness theorem. Beyond the details, what stayed with me above all was his criticism of the idea that an algorithm could simulate the deepest aspects of consciousness, such as awareness or sentience: the feeling of being conscious, of noticing, of perceiving.

M. C. Escher as a connecting point

Penrose and Hofstadter share one thing: admiration for Escher. But each highlights different aspects.

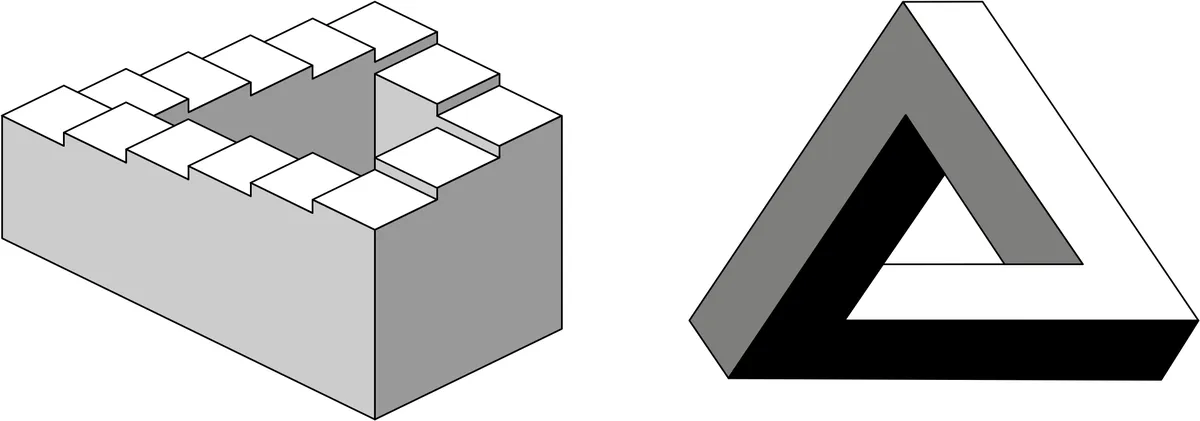

Penrose emphasizes Escher’s visual paradoxes: local consistency that turns into global impossibility. Each step makes sense, but the whole violates physical geometry. The Penroses, Roger and his father Lionel, popularized the impossible triangle and the infinite staircase, which Escher turned into visual art in Ascending and Descending and in Waterfall. They serve as metaphors for how apparently innocent discrete rules can produce paradoxes and limits on what is computable.

The infinite staircase and the impossible triangle: two figures devised by Roger Penrose and his father Lionel Penrose.

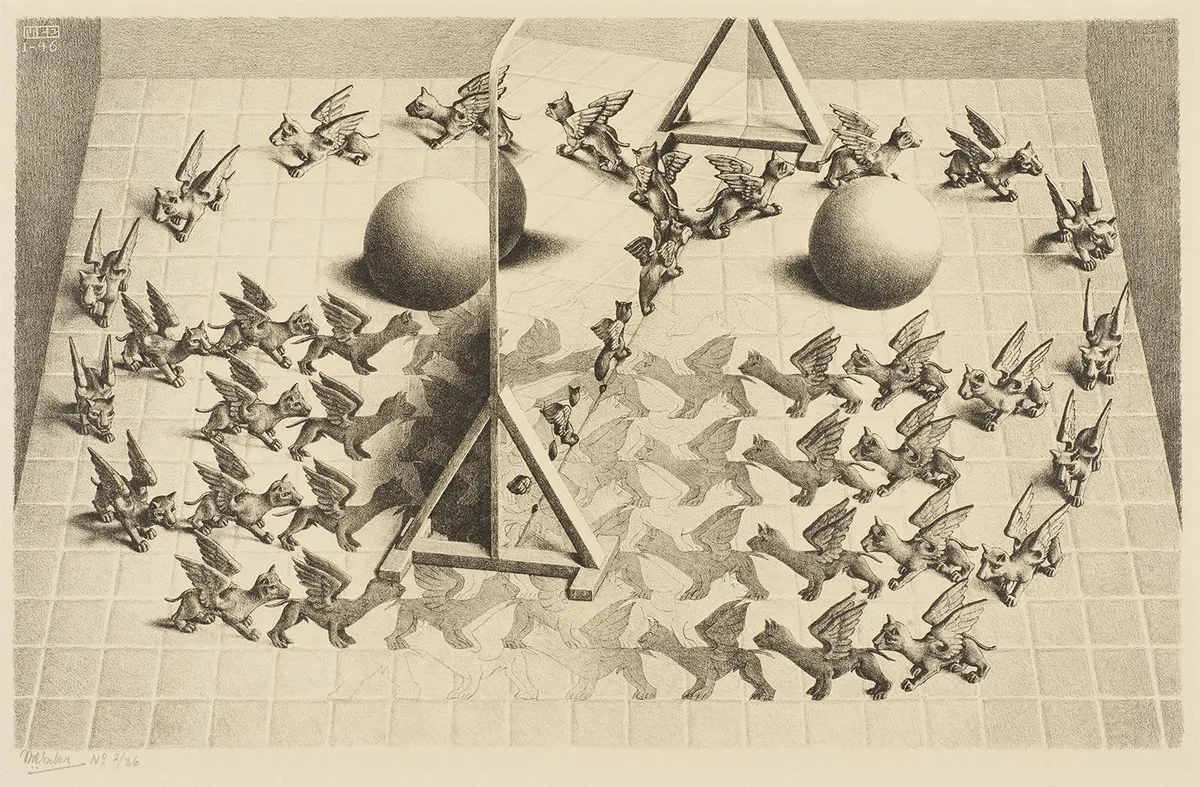

Hofstadter, for his part, emphasizes works such as Drawing Hands, Relativity, and Print Gallery, in which the strange loop becomes visible: levels that refer to one another with no clear beginning or end. For Hofstadter, these recursive, self-referential circles are essential to understanding consciousness and the self.

One image I especially like is Magic Mirror, which combines many of these elements: mirrors, reality and illusion, strange loops, and tessellations. It neatly summarizes all the previous concepts.

Escher’s print Magic Mirror (1946), at the Escher in Het Paleis Museum.

A critique of strong AI: the “Einstein book”

One example from Penrose that has always stayed with me is his criticism of Hofstadter’s idea of a book that contains Einstein’s mind and that we can interact with by asking it questions. If strong AI is possible, then Einstein’s mind could be simulated in this way. Penrose raised questions that, to me, make the idea absurd:

Would Einstein’s awareness be enacted only when the book is being so examined? Would he be aware twice over if two people chose to ask the book the same question at two completely different times? Or would that entail two separate and temporally distinct instances of the same state of Einstein’s awareness? Perhaps his awareness would be enacted only if the book is changed? […] Or would the book-Einstein remain completely self-aware even if it were never examined or disturbed by anyone or anything? […] What does it mean to activate an algorithm, or to embody it in physical form? Would changing an algorithm be different in any sense from merely discarding one algorithm and replacing it with another? What on earth does any of this have to do with our feelings of conscious awareness?

Hofstadter never really answers these questions: he sidesteps them and never engages with the fundamental problem of conscious awareness.

Four positions, according to Penrose

In Shadows of the Mind (1994), Penrose makes his position more concrete and, before doing so, carefully delimits what he means by “consciousness”:

How do our feelings of conscious awareness -of happiness, pain, love, aesthetic sensibility, will, understanding, etc.- fit into such a computational picture? […]

Penrose stresses feelings of conscious awareness. Simulating behavior is not enough for him; he is pointing at the most fundamental problem of consciousness: the sense of being awake, of feeling, of experiencing reality.

He then lays out four alternative positions:

It seems to me that there are at least four positions -or extremes of position- that one may reasonably hold regarding the issue:

1. All thinking is computation; in particular, feelings of conscious awareness are evoked merely by the carrying out of appropriate computations.

2. Awareness is a feature of the brain’s physical action; and whereas any physical action may be simulated computationally, computational simulation cannot by itself evoke awareness.

3. Appropriate physical action of the brain evokes awareness, but this physical action cannot even be properly simulated computationally.

4. Awareness cannot be explained in physical, computational, or any other scientific terms.

Viewpoint 3 is the one that I think comes closest to the truth.

Position 1 is usually associated with computationalism or functionalism; position 2 with biological naturalism; position 3 might be called non-computational physicalism (there are non-computable physical processes involved in consciousness); and position 4 aligns with idealism or with certain variants of mysterianism (consciousness as something intrinsically inaccessible to science).

Penrose aligns himself with option 3. Laying my cards on the table, I vote for option 4. I believe conscious sensations are something mysterious: because of their personal and ineffable character, their explanation lies outside the scope of objective science. What do you think?

And what did Hofstadter do with feelings?

Let us return to Penrose’s question:

How do our feelings of conscious awareness -of happiness, pain, love, aesthetic sensibility, will, understanding, etc.- fit into such a computational picture? […]

It is striking how carefully he chooses the phrase “feelings of conscious awareness”. He could have said feelings, consciousness, or awareness separately; instead he brings the three together and then lists concrete sensations: feelings of conscious awareness of happiness, feelings of conscious awareness of pain, feelings of conscious awareness of will, sensibility, understanding, and so on.

Penrose is not satisfied with a purely functional perspective, the “behaves as if” of the Turing test. He is after lived, phenomenal experience. If we claim that an AI can equal a human being, Penrose demands that it feel as humans feel: that it have feelings of conscious awareness.

And Hofstadter? Rereading GEB, I do not find a clear position on feelings. Near the end, in “Intelligence and Emotions”, he tries to separate the two concepts. He opens with the scene of a child crying because his balloon has burst, and concludes:

It might be objected that, even if the program “understands” what is said, in an intellectual sense, it will never really understand it until it has cried and cried. And when is a computer ever going to do such a thing? This is the sort of humanistic issue that Joseph Weizenbaum is led to in his book Computer Power and Human Reason; for my part, I think it is an important issue: in fact, a truly profound one. Unfortunately, many AI researchers are at present unwilling to consider this problem seriously. Yet to some extent they are right, for it is a bit premature to concern oneself now with the crying of computers: first, it is necessary to deal with the rules that will allow computers to handle language and other things; in due course we shall confront questions of greater depth.

The emphasis is mine. I find it revealing: Hofstadter separates what is “intellectual”, the rules for dealing with language, from feelings. And that would include, in my opinion, the “feeling of being conscious” that Penrose is talking about.

GEB is about symbols, meanings, and formal structures: the intellect. Hofstadter considers that to be the fundamental core of our mind. Perhaps that is why he was horrified when he realized that an AI had mastered this side of our behavior.

The plot twist of language models

In the last decade we have seen something surprising: large language models (LLMs), trained exclusively on text with no sensory or motor input, learn to manipulate syntactic structures and to handle semantic regularities of use. They maintain reference in a dialogue, follow complex instructions, summarize, translate, argue, and program, all without ever having “touched” the world beyond what is implicit in written corpora.

This proves nothing definitive about consciousness, but it does show that a large part of linguistic competence and textual reasoning, much of what we associated with “linguistic intellect”, can be learned from text alone.

That does not solve the riddle of feeling, but it does make one thing clearer: speaking, reasoning, and maintaining referential coherence do not by themselves imply having felt anything at all.

A new perspective

With this contemporary lens, I return to Hofstadter and Penrose to better understand what they were really arguing about, and why, perhaps, they were not so far apart.

From Hofstadter’s point of view, language models could be seen as confirmation that symbolic patterns and loops of reference are enough for reasoning. From Penrose’s point of view, they would confirm that mastery of language does not require lived experience.

Almost forty years after my first reading of GEB, rereading it with this perspective is very suggestive. Hofstadter does not address the feeling of being conscious; he talks about symbols and language. Penrose, by contrast, talks about the sensation of being conscious. Perhaps they were not really so opposed: they were arguing about ambiguous words. Each understood “mind” and “consciousness” differently.

In the next article I want to disambiguate the word “consciousness” with a playful typology: type-1, type-2, and type-3 consciousness.

I will tell that story in a couple of weeks.

See you next time.